My A1 robot is built on a wheelchair base. Its purpose was telepresence. I built it 12 years ago, and it has been collecting dust ever since. I could not get it working at the time because of the lack of affordable technology, the limited software available, and my own skill set.

A lot has changed since then. Widely available electronics and cheap computing power now give much more functionality out of the box. I want the robot to be a platform capable of driving around autonomously and doing image-recognition tasks like detecting garbage and faces.

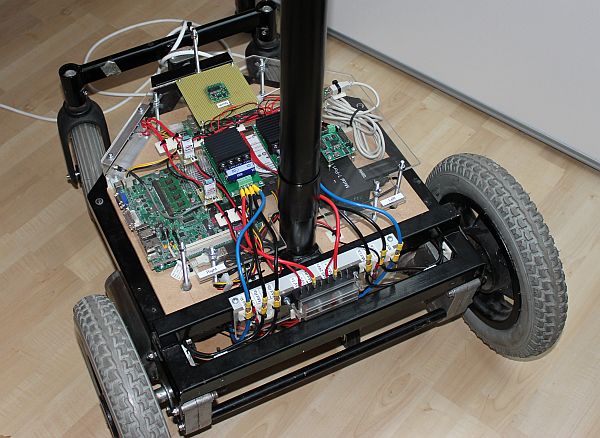

The old electronics on this robot, like an Intel Mini-ITX D945GSEJT board and a servo driver, had to go. I ripped them out to make room for new components. I have already replaced the old, crumbling tires. The plan is to reuse the wheelchair base, motors, and motor drivers (MD03 from Devantech). The 24 V power supply is also staying for now, for testing. A Teensy 4.1 will do the low-level control, like handling the motor drivers and reading sensor data. This controller has many inputs and outputs, plus serial, SPI, CAN, and I2C ports.

I haven’t decided which single-board computer I will use for the higher-level control. A prominent candidate is the NVIDIA Jetson Nano or better, but it’s hard to get one because of the chip shortage. Another option is to use one or more Raspberry Pi boards and connect them over Ethernet. The Teensy will use a serial connection to exchange data with the Raspberry Pi or the Jetson. This configuration makes it possible to build a modular system and to swap components when better ones become available.
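
A serial link like this needs some kind of framing, so the receiver can tell where a message starts and whether it arrived intact. Here is a minimal sketch in Python of how the Raspberry Pi or Jetson side could pack and unpack messages. The frame layout (a start byte `0xAA`, a one-byte length, the payload, and an XOR checksum) and all function names are assumptions made up for this illustration, not a protocol I have settled on.

```python
START_BYTE = 0xAA  # hypothetical start-of-frame marker


def xor_checksum(data: bytes) -> int:
    """XOR all bytes together into a single checksum byte."""
    checksum = 0
    for byte in data:
        checksum ^= byte
    return checksum


def encode_frame(payload: bytes) -> bytes:
    """Wrap a payload as: start byte, length, payload, checksum."""
    if len(payload) > 255:
        raise ValueError("payload too long for a one-byte length field")
    body = bytes([len(payload)]) + payload
    return bytes([START_BYTE]) + body + bytes([xor_checksum(body)])


def decode_frame(frame: bytes) -> bytes:
    """Check a complete frame and return its payload."""
    if len(frame) < 3 or frame[0] != START_BYTE:
        raise ValueError("missing start byte")
    if len(frame) != frame[1] + 3:
        raise ValueError("length mismatch")
    # The checksum covers the length byte and the payload.
    if xor_checksum(frame[1:-1]) != frame[-1]:
        raise ValueError("checksum mismatch")
    return frame[2:-1]
```

The Teensy would need the same logic in C++. An XOR checksum is very simple and only catches some errors, so a CRC would probably be a better choice once things get serious.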

The first task is to make the robot drive by remote control. I am building a remote control for this with a Bluetooth module. There will also be a Bluetooth module connected to the Teensy on the robot. The motor drivers will be connected through I2C, which makes it possible to read data from the drivers, such as motor current and temperature.
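
Because the wheelchair base steers with its two drive motors, the remote's joystick input has to be turned into a separate speed for the left and the right motor. Below is a minimal sketch in Python of one common way to do this, often called arcade mixing. The function name and the -1.0 to 1.0 ranges are assumptions for illustration; the real version would run in C++ on the Teensy and scale the result to whatever the motor drivers expect.

```python
def mix_arcade(throttle: float, turn: float) -> tuple:
    """Convert joystick throttle and turn (-1.0..1.0) into
    (left, right) motor commands, each in -1.0..1.0."""
    # Clamp the inputs so a noisy joystick cannot exceed the range.
    throttle = max(-1.0, min(1.0, throttle))
    turn = max(-1.0, min(1.0, turn))

    # Turning right speeds up the left motor and slows the right one.
    left = throttle + turn
    right = throttle - turn

    # If either side is out of range, scale both down together
    # so the ratio between them (the turn radius) is preserved.
    scale = max(1.0, abs(left), abs(right))
    return left / scale, right / scale
```

For example, `mix_arcade(1.0, 0.0)` drives both motors forward at full speed, and `mix_arcade(0.0, 1.0)` spins the robot on the spot by running the motors in opposite directions.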
